I've always loved Texas Hold'em. Not in the "I've memorized GTO ranges and grind online" way — more in the "I'll never turn down a home game" way. There's something about the mix of incomplete information, reads, and the occasional well-timed bluff that just gets me. It's part math, part psychology, part gut feel. As someone who builds software for a living, Hold'em has always felt like the most interesting unsolved problem sitting right in front of me at the kitchen table.

When I launched AI Labs, I said each project was an excuse to go deep on something new. That's true — but this one was a little different. This wasn't learning a new framework or exploring an unfamiliar pattern. This was taking something I already loved and asking: what happens when I hand the cards to an AI?

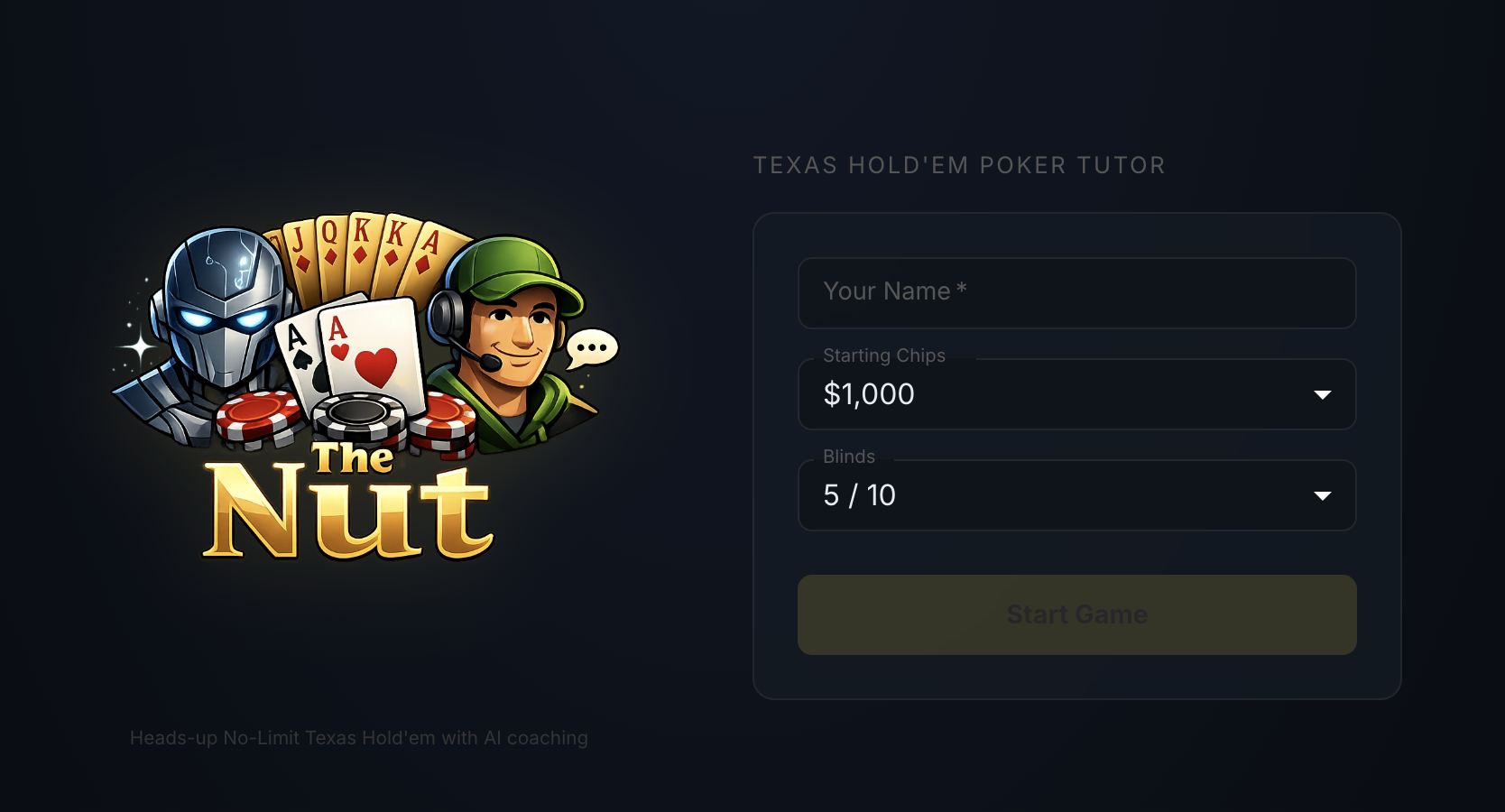

The answer is The Nut — a heads-up Texas Hold'em poker tutor where one AI plays against you and another one coaches you through it.

The concept is straightforward. You sit down at a virtual table, type in your name, set your starting chip stack and blinds, and play heads-up No Limit Hold'em against an AI opponent called The House. At any point during a hand, you can tap a button and ask The Coach for advice — pot odds, hand strength, what it would do in your spot, and why.

The name comes from poker terminology. In Hold'em, "the nuts" refers to the best possible hand given the community cards on the board. If you're holding the nuts, nobody can beat you. It felt like the right name for a tutor that's supposed to help you find the best play.

Under the hood, the app is built with Spring Boot on the backend, React on the frontend, and Vertex AI Gemini 2.0 Flash as the LLM — wired in through Spring AI. The whole thing runs in a single Docker container with the React build baked into the Spring Boot static resources.

The thing that makes this project interesting from an AI engineering perspective isn't that it uses an LLM. It's that it uses the LLM as two separate agents, in completely different roles with completely different information.

| | The House (Opponent) | The Coach |
|---|---|---|
| Role | Makes betting decisions against you | Gives you advice when you ask |
| Sees | Its own hole cards + community cards + betting history | Your hole cards + community cards + full game state |
| Personality | Competitive, unpredictable, talks trash | Encouraging, patient, analytical |
| Decision type | Action (fold, call, raise, all-in) | Recommendation (what to do and why) |
| Temperature | 0.8 (creative, keeps you guessing) | 0.4 (less random, more consistent advice) |

They never talk to each other. The House doesn't know what The Coach told you, and The Coach doesn't know what The House is thinking. They're two independent agents operating on different slices of the same game state — which, if you think about it, is exactly how a real poker game with a friend whispering advice in your ear would work.
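
To make that separation concrete, here's a minimal sketch of how the two slices could be carved out of one game state. This is not the app's actual code: `TableViews`, `GameState`, `HouseView`, `CoachView`, and the card strings are all hypothetical names for illustration, and I'm assuming "full game state" for The Coach means the public state (pot and stacks) rather than The House's hole cards.

```java
import java.util.List;

// Hypothetical types for illustration; the real app's classes are not shown in this post.
final class TableViews {
    // Full state, held only by the deterministic game engine.
    record GameState(List<String> playerHole, List<String> houseHole,
                     List<String> board, List<String> bettingHistory,
                     int pot, int playerStack, int houseStack) {}

    // What The House may see: its own hole cards, the board, the betting history.
    record HouseView(List<String> holeCards, List<String> board,
                     List<String> bettingHistory) {}

    // What The Coach may see: the player's hole cards plus the public game state.
    // Assumption: The Coach never receives The House's hole cards.
    record CoachView(List<String> holeCards, List<String> board,
                     List<String> bettingHistory,
                     int pot, int playerStack, int houseStack) {}

    static HouseView forHouse(GameState s) {
        return new HouseView(s.houseHole(), s.board(), s.bettingHistory());
    }

    static CoachView forCoach(GameState s) {
        return new CoachView(s.playerHole(), s.board(), s.bettingHistory(),
                             s.pot(), s.playerStack(), s.houseStack());
    }
}
```

Because each agent's prompt would be built only from its own view, neither one can leak the other's cards, no matter what the model does with the prompt.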

The Coach's architecture is worth calling out. It runs on a two-tier system: a deterministic math layer calculates the hard numbers — pot odds, hand equity, outs, hand strength — and feeds those results into the LLM coaching layer. The AI then takes that math and translates it into conversational, contextual advice. The key insight here is that the numbers are never hallucinated. Pot odds are pot odds. The math is classical code. The AI's job is to explain the math in a way that actually helps you learn.
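
Here's a rough sketch of what that two-tier split could look like. It's illustrative only: `CoachMath` and its methods are hypothetical names, the prompt wording is made up, and for equity I've swapped in the well-known "rule of 4 and 2" approximation since the post doesn't go into how the real math layer computes it.

```java
import java.util.Locale;

// Hypothetical sketch of a deterministic math layer feeding an LLM prompt.
final class CoachMath {
    // Pot odds as the share of the final pot the caller has to put in:
    // toCall / (pot + toCall). `pot` already includes the opponent's bet.
    static double potOdds(int pot, int toCall) {
        if (toCall <= 0) return 0.0; // nothing to call, so no price to pay
        return (double) toCall / (pot + toCall);
    }

    // Rule of 4 and 2: roughly 4% per out with two cards to come,
    // 2% per out with one card to come. An approximation, not exact equity.
    static double approxEquity(int outs, int cardsToCome) {
        int multiplier = (cardsToCome == 2) ? 4 : 2;
        return Math.min(1.0, outs * multiplier / 100.0);
    }

    // The numbers are computed in code and passed to the model as fixed facts;
    // the model is only asked to explain them.
    static String coachPrompt(int pot, int toCall, int outs, int cardsToCome) {
        double odds = potOdds(pot, toCall);
        double equity = approxEquity(outs, cardsToCome);
        return String.format(Locale.ROOT,
                "Pot: %d. To call: %d. Pot odds: %.1f%%. Outs: %d. "
              + "Estimated equity: %.1f%%. Explain whether calling is profitable. "
              + "Use these numbers exactly; do not recompute them.",
                pot, toCall, odds * 100, outs, equity * 100);
    }
}
```

For example, facing a 50-chip bet into a pot that now holds 150, you need 25% equity to break even on a call. A flush draw with nine outs on the flop comes in at roughly 36% by this approximation, so the call is profitable, and that's the conclusion the LLM layer gets to explain in plain language.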

Here's where it gets interesting from a software architecture standpoint.

If you've ever tried to build a poker opponent the traditional way, you know what you're signing up for. You need hand ranges for different positions. Pre-flop raising tables. Post-flop continuation bet logic. Bluffing frequency calculations. Bet sizing relative to pot and stack depth. Opponent modeling. The list goes on. We're talking hundreds of lines of carefully tuned conditional logic — a full-blown strategy engine — and even then, it plays like a robot.

With an LLM, all of that collapses into a prompt and a persona file.

The model already knows poker. It's read millions of poker strategy discussions, hand analyses, and tournament recaps during training. You don't need to teach it what a check-raise is or when to slow-play a set. You just need to give it the current game state, tell it what role it's playing, and let it make a decision.

Now, I want to be honest — this isn't going to beat a professional poker player. An LLM opponent won't play perfect game theory optimal poker. But that's not the point. The point is that it creates real, interesting decisions for a learner. It bets when it should. It folds when it's beat (usually). It occasionally pulls off a bluff that makes you second-guess yourself. For a learning tool, that's more than enough.

The key distinction here is what the AI replaces. It replaces the strategy engine — the decision-making brain. It does not replace the game engine. All the rules, the hand progression, the blind posting, the showdown logic, the chip accounting — that's all deterministic Java code, and it should be. You don't want an LLM deciding whether a flush beats a straight. You want it deciding whether to bluff the river.
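
One way to enforce that boundary is to treat the model's reply as a suggestion that the engine validates. The sketch below is an assumption about how that could work, not the app's real code: `ActionGate`, the simplified legality rules, and the "check or fold" safe default are all hypothetical.

```java
import java.util.EnumSet;
import java.util.Locale;
import java.util.Set;

// Hypothetical gate between the LLM's suggested action and the game engine.
final class ActionGate {
    enum Action { FOLD, CHECK, CALL, RAISE, ALL_IN }

    // Engine-side rules (simplified): which actions are legal right now.
    static Set<Action> legalActions(int toCall, int stack) {
        EnumSet<Action> legal = EnumSet.of(Action.ALL_IN);
        if (toCall == 0) {
            legal.add(Action.CHECK);
        } else {
            legal.add(Action.FOLD);
        }
        if (stack > toCall) {
            // Enough chips to call or raise without going all-in.
            if (toCall > 0) legal.add(Action.CALL);
            legal.add(Action.RAISE);
        }
        return legal;
    }

    // Parse the model's reply. Anything unparseable or illegal falls back to
    // the safest legal action: check if free, otherwise fold.
    static Action resolve(String modelReply, int toCall, int stack) {
        Action safe = (toCall == 0) ? Action.CHECK : Action.FOLD;
        if (modelReply == null) return safe;
        String token = modelReply.trim().toUpperCase(Locale.ROOT)
                .replace('-', '_').replace(' ', '_');
        try {
            Action chosen = Action.valueOf(token);
            return legalActions(toCall, stack).contains(chosen) ? chosen : safe;
        } catch (IllegalArgumentException e) {
            return safe; // the reply was not a recognizable action name
        }
    }
}
```

The model decides whether to bluff the river; the engine decides whether that bluff is a legal move.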

Consistent with my workflow these days, I built the implementation with Claude. I described the architecture, the agent roles, the game flow, and Claude wrote the code. This has become my standard approach for AI Labs projects — I'm the architect, Claude is the builder, and we iterate fast using a proven workflow to limit hallucinations and ensure quality deliverables. If you've been following this blog, this should not surprise you at all.

The logo, though? That was ChatGPT's contribution. I needed a PNG with transparency — a poker-themed logo with character. The first attempt came back looking like clip art from a 2004 poker forum. After a few more attempts, I finally nailed it — the military-styled dealer character flanked by cards and chips. I will say this: getting AI image generation to produce clean PNGs with actual transparency is still harder than it should be. But the result speaks for itself.

The UI turned out to be the hardest part of the entire project, and it had nothing to do with AI. Poker is an information-dense game. You've got your hole cards, the community cards, chip stacks for both players, the pot, betting actions, a game log, and a coach panel — all of which need to be visible simultaneously. I tried vertical layouts. I tried tabbed interfaces on mobile. I tried collapsible panels. Every mobile-friendly approach I tried felt cramped or required too many taps to see critical information. Eventually, I made the call to go desktop-only for this proof of concept. A three-column layout — game log on the left, table in the center, coach on the right — gave everything room to breathe.

One of the design decisions I'm most proud of has nothing to do with how the AI works — it's about what happens when the AI doesn't work.

The entire AI layer is optional. A single configuration flag, app.ai.enabled, toggles it on or off. When it's off, The House becomes a simple random opponent with hardcoded probability thresholds, and The Coach disappears entirely. But here's the thing: the app is still a fully playable poker game. You can sit down, play hands, and practice your game. It won't be as interesting without the AI opponent and coach, but it works.
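
A random opponent with hardcoded thresholds can be very small. The sketch below shows the general shape; `FallbackHouse` is a hypothetical name, and the 20% and 80% cutoffs are made-up values for illustration, not the ones the app uses.

```java
import java.util.Random;

// Hypothetical non-AI opponent: one random roll mapped to an action by fixed thresholds.
final class FallbackHouse {
    enum Action { FOLD, CHECK, CALL, RAISE }

    // Illustrative thresholds only; the real values are not given in this post.
    static final double FOLD_BELOW = 0.20;
    static final double RAISE_ABOVE = 0.80;

    private final Random rng;

    FallbackHouse(Random rng) {
        this.rng = rng;
    }

    // Roll once per decision and map the roll to an action.
    Action decide(int toCall) {
        return decide(toCall, rng.nextDouble());
    }

    // Split out so the roll-to-action mapping can be checked deterministically.
    static Action decide(int toCall, double roll) {
        if (roll >= RAISE_ABOVE) return Action.RAISE;
        if (toCall == 0) return Action.CHECK; // never fold when checking is free
        return roll < FOLD_BELOW ? Action.FOLD : Action.CALL;
    }
}
```

It ignores its cards entirely, which is exactly why it's less interesting to play against, but it keeps the game loop running with zero external dependencies.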

This was a deliberate architectural choice. The Spring beans for the AI services are conditionally created. The AI calls have timeouts and silent fallbacks. If Vertex AI goes down, if your credentials expire, if the model returns garbage — the game keeps going. The app doesn't go down with it.
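
The timeout-and-fallback part of that can be sketched with nothing but the JDK. This is a minimal illustration under my own assumptions, not the app's implementation: `GuardedAiCall` and `callWithFallback` are hypothetical names, and the supplier stands in for whatever actually calls the model. (On the bean side, Spring Boot's `@ConditionalOnProperty` is one common way to create beans only when a flag like app.ai.enabled is set.)

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

// Hypothetical wrapper: an AI call that can never take the game down with it.
final class GuardedAiCall {
    // Runs the (possibly slow or failing) AI call with a deadline. On timeout
    // or any exception, returns the fallback instead of propagating the error.
    static <T> T callWithFallback(Supplier<T> aiCall, T fallback, long timeoutMillis) {
        return CompletableFuture.supplyAsync(aiCall)
                // If no result arrives in time, complete with the fallback.
                .completeOnTimeout(fallback, timeoutMillis, TimeUnit.MILLISECONDS)
                // If the call threw, swallow the error and use the fallback.
                .exceptionally(ex -> fallback)
                .join();
    }
}
```

Note that a timed-out call keeps running in the background here; it just no longer blocks the hand. Something like `callWithFallback(() -> askModel(state), randomAction, 3000)` would keep the table moving, where `askModel`, `randomAction`, and the three-second budget are placeholders of mine.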

I think this is an important pattern for AI-powered applications in general, and it connects back to the AI Labs philosophy: build things that work first, then layer AI on top. The AI should make the experience better, not be the thing that holds it together.

If you zoom out a little, the pattern here is more interesting than the specific game. You have one AI that plays and another AI that teaches. The game happens to be poker, but the architecture isn't poker-specific.

Chess, backgammon, bridge, even complex board games: anywhere there's strategy to learn, with or without hidden information, you could apply the same dual-agent pattern. An opponent that adapts to your skill level and a coach that explains the reasoning behind the right move. The "AI on your side" model, where artificial intelligence isn't just an adversary but an active learning companion, feels underexplored to me. Most AI gaming projects focus on building the strongest possible opponent. I think there's something more valuable in building the most helpful possible teacher.

I'm not saying this is the future of game-based learning. But I do think there's a thread here worth pulling on.

Building The Nut reminded me why side projects matter. Not because they ship to millions of users, and not because they're technically perfect. They matter because every once in a while, you get to build something in a space you genuinely enjoy, and the learning just flows. I probably absorbed more about prompt engineering, agent architecture, and graceful degradation patterns building this poker tutor than I would have from any course or tutorial.

If you want to sit down at the table and see how you stack up against The House, give it a try. The Coach is standing by.

Now if you'll excuse me, I have cards to play.

–Jeremy

Published on March 09, 2026 in tech